The potential for vision-based interfaces is broad, spanning consumer, enterprise, industrial, medical, transportation, military, and other industry verticals. The scope of this opportunity is evident in just one subsegment: consumer technology devices that users operate via touchscreens or other tactile input. Popular touch-centric devices include smartphones, tablets, smart TVs, and laptops.

Currently, U.S. households have an average of 16 connected devices: 11 from the mature consumer electronics (CE) category, three from the smart home category, and two from the connected health category.

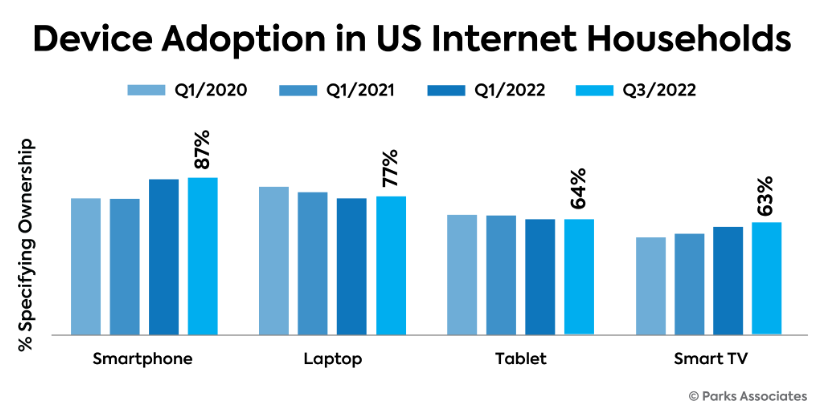

The smartphone is the most common of all touch-based devices and a focal point of human-technology interaction. Smartphones are present in 87 percent of U.S. internet households.

Previous attempts at vision-based interaction in the smartphone industry have been unsuccessful. They relied on limited computer vision capabilities tied to the smartphone’s processor and cameras, on eye tracking on tablets and smartphones, or on near-field sensors. Google Glass is the most prominent example of a vision-based product that arrived with considerable hype but ultimately failed. Consumers’ lack of interest stemmed from several factors, including high cost, poor UI/UX design, short battery life, and the absence of a tangible improvement over legacy touch interaction. Some smartphones continue to use the front camera for attention tracking, but the industry overall remains focused on touch as the main interaction modality.

In terms of control and interaction, tablet dynamics are nearly identical to those of smartphones, but with a larger display and touch input area. As with smartphones, only limited non-touch interaction is possible today, primarily via voice assistants. Tablets are in 64 percent of U.S. internet households.

Smart TV manufacturers face constant pressure from commoditization, and control and user interfaces are a recurring focus of their attempts at differentiation. Smart TVs are now in 63 percent of U.S. internet households.

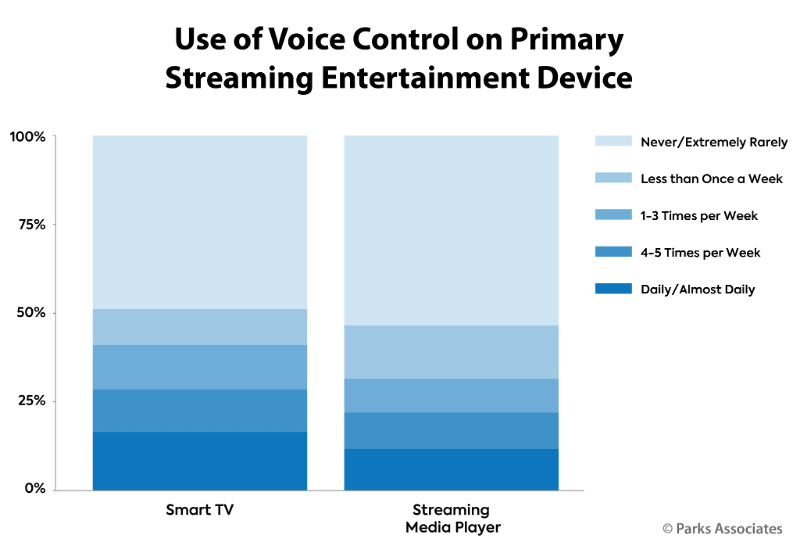

While control of the TV has evolved somewhat, the dominant control method is still the device’s remote control or a remote-control app on a mobile device. Consumers can use voice input to control the TV, through built-in microphones or smart speakers, but this method merely supplements what remains primarily a manual control paradigm. For the top streaming video products (smart TVs and streaming media players), voice control is not used frequently: only 17 percent of smart TV owners use voice daily to control the video experience.

Laptops rely chiefly on the keyboard, paired with a touchpad or an attached mouse, for input. Some models augment these methods with touchscreens, cameras, fingerprint sensors, and microphones; however, these devices remain principally bound to tactile methods of input. Laptops are in 77 percent of U.S. internet households.

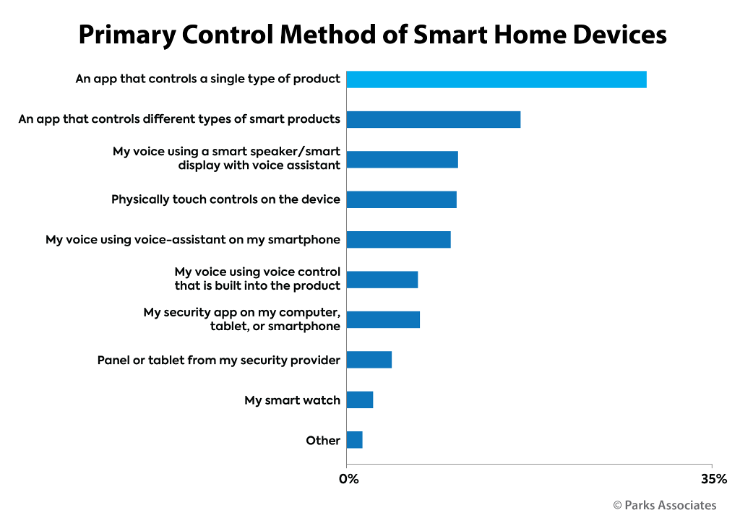

38 percent of U.S. internet households own at least one smart home device, such as a smart thermostat, smart door lock, video doorbell, or smart light bulb. Dedicated apps are the primary control method among smart home device owners, but 28 percent say voice is their primary method of control, whether through the device’s native microphones and processing ability or through spoken commands to a smartphone, computer, tablet, or smart speaker (potentially with the help of a voice assistant). Voice is particularly popular for light bulbs and plugs, which users may operate while seated or with their hands full.

As noted, anything with a camera is a candidate for Vision-Based Interaction (VBI). Some security systems use embedded video to enhance the user experience, with facial recognition able to distinguish a household member from a visitor or an intruder. This is one area of the smart home ecosystem where VBI could make early inroads, for example by giving an identified head of household the ability to disarm or alert a system via specific eye movements or gaze.

13 percent of U.S. internet households owned a VR headset in 2022, double the adoption rate in 2019. VR systems require multiple forms of input and positioning data to properly render a user in the virtual space. Accelerometers, gyroscopes, and magnetometers help measure a person’s rotational movement in space. Some systems also use special markers and depth-sensing cameras to obtain positional data. Accessories such as hand controllers and gloves may provide additional input. Finally, eye tracking is used for aiming and navigating inside the VR headset display. Eye trackers measure both eye movement and the gaze point, the point in the distance at which the headset wearer’s eye is focused.

These devices are only a subset of the consumer technology devices that could benefit from vision-based interaction as a substitute for, or augmentation of, their existing touch-based input. Their high level of adoption today hints at how broadly vision-based interaction could make its way into consumers’ everyday use of familiar technology devices.

Vision-based interaction can be applied to these common devices to augment or replace their existing interface modalities.

This is an excerpt from Parks Associates’ complimentary white paper “Vision-Based Technology: Next-Gen Control,” in collaboration with Adeia. The white paper examines these and other potential use cases where vision-based interfaces would augment the consumer experience and enhance consumers’ ability to interact with and control their devices. Download now to explore vision-based solutions that could improve and simplify the consumer experience in navigating and selecting content on these devices.